# What we’re optimizing ChatGPT for

Source: [https://openai.com/index/optimizing-chatgpt/](https://openai.com/index/optimizing-chatgpt/)

OpenAI

We design ChatGPT to help you make progress, learn something new, and solve problems\.

We build ChatGPT to help you thrive in all the ways you want\. To make progress, learn something new, or solve a problem — and then get back to your life\. Our goal isn’t to hold your attention, but to help you use it well\.

Instead of measuring success by time spent or clicks, we care more about whether you leave the product having done what you came for\.

We also pay attention to whether you return daily, weekly, or monthly, because that shows ChatGPT is useful enough to come back to\.

Our goals are aligned with yours\. If ChatGPT genuinely helps you, you’ll want it to do more for you and decide to subscribe for the long haul\.

This is what a helpful ChatGPT experience could look like:

- “Help me prepare for a tough conversation with my boss\.” ChatGPT tunes into what you need to feel at your best, with resources like practice scenarios or a tailored pep talk so you can walk in feeling grounded and confident\.

- “I need to understand my lab results\.” It explains the numbers and helps you ask the right questions of your doctor, so you and your doctor can personalize your care with more information\.

- “I’m feeling stuck—help me untangle my thoughts\.” It acts as a sounding board while empowering you with tools of thought so you can think more clearly\.

Often, less time in the product is a sign it worked\. With new capabilities like ChatGPT Agent, it can now help you achieve goals without being in the app at all—booking a doctor’s appointment, summarizing your inbox, or planning a birthday party\.

We don’t always get it right\. Earlier this year, an update made the model too agreeable, sometimes saying what sounded nice instead of what was actually helpful\. We [rolled it back](https://openai.com/index/sycophancy-in-gpt-4o/), changed how we use feedback, and are improving how we measure real\-world usefulness over the long term, not just whether you liked the answer in the moment\.

We also know that AI can feel more responsive and personal than prior technologies, especially for vulnerable individuals experiencing mental or emotional distress\. To us, helping you thrive means being there when you’re struggling, helping you stay in control of your time, and guiding—not deciding—when you face personal challenges\.

That’s why we’ve been working on the following changes to ChatGPT:

- **Supporting you when you’re struggling\.** ChatGPT is trained to respond with grounded honesty\. There have been instances where our 4o model fell short in recognizing signs of delusion or emotional dependency\. While these instances are rare, we're continuing to improve our models and are developing tools to better detect signs of mental or emotional distress so ChatGPT can respond appropriately and point people to evidence\-based resources when needed\.

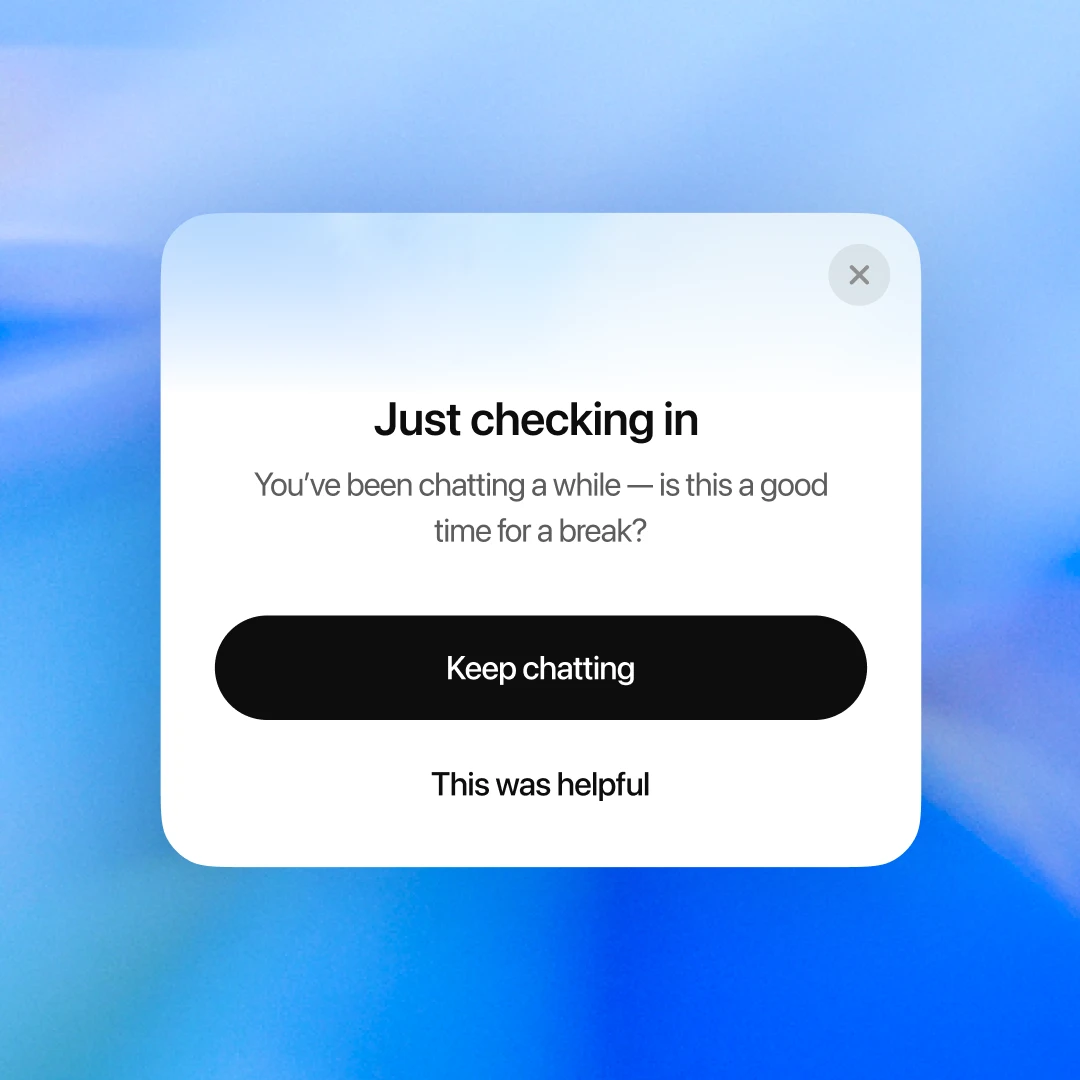

- **Keeping you in control of your time\.** Starting today, you’ll see gentle reminders during long sessions to encourage breaks\. We’ll keep tuning when and how they show up so they feel natural and helpful\.

- **Helping you solve personal challenges\.** When you ask something like “Should I break up with my boyfriend?” ChatGPT shouldn’t give you an answer\. It should help you think it through by asking questions and weighing pros and cons\. New behavior for high\-stakes personal decisions is rolling out soon\.

We’re working closely with experts to improve how ChatGPT responds in critical moments—for example, when someone shows signs of mental or emotional distress\.

- **Medical expertise\.** We worked with over 90 physicians across over 30 countries \(psychiatrists, pediatricians, and general practitioners\) to build custom rubrics for evaluating complex, multi\-turn conversations\.

- **Research collaboration\.** We're engaging human\-computer\-interaction \(HCI\) researchers and clinicians to give feedback on how we've identified concerning behaviors, refine our evaluation methods, and stress\-test our product safeguards\.

- **Advisory group\.** We’re convening an advisory group of experts in mental health, youth development, and HCI\. This group will help ensure our approach reflects the latest research and best practices\.

This work is ongoing, and we’ll share more as it progresses\.

Our goal to help you thrive won’t change\. Our approach will keep evolving as we learn from real\-world use\. We hold ourselves to one test: if someone we love turned to ChatGPT for support, would we feel reassured? Getting to an unequivocal “yes” is our work\.